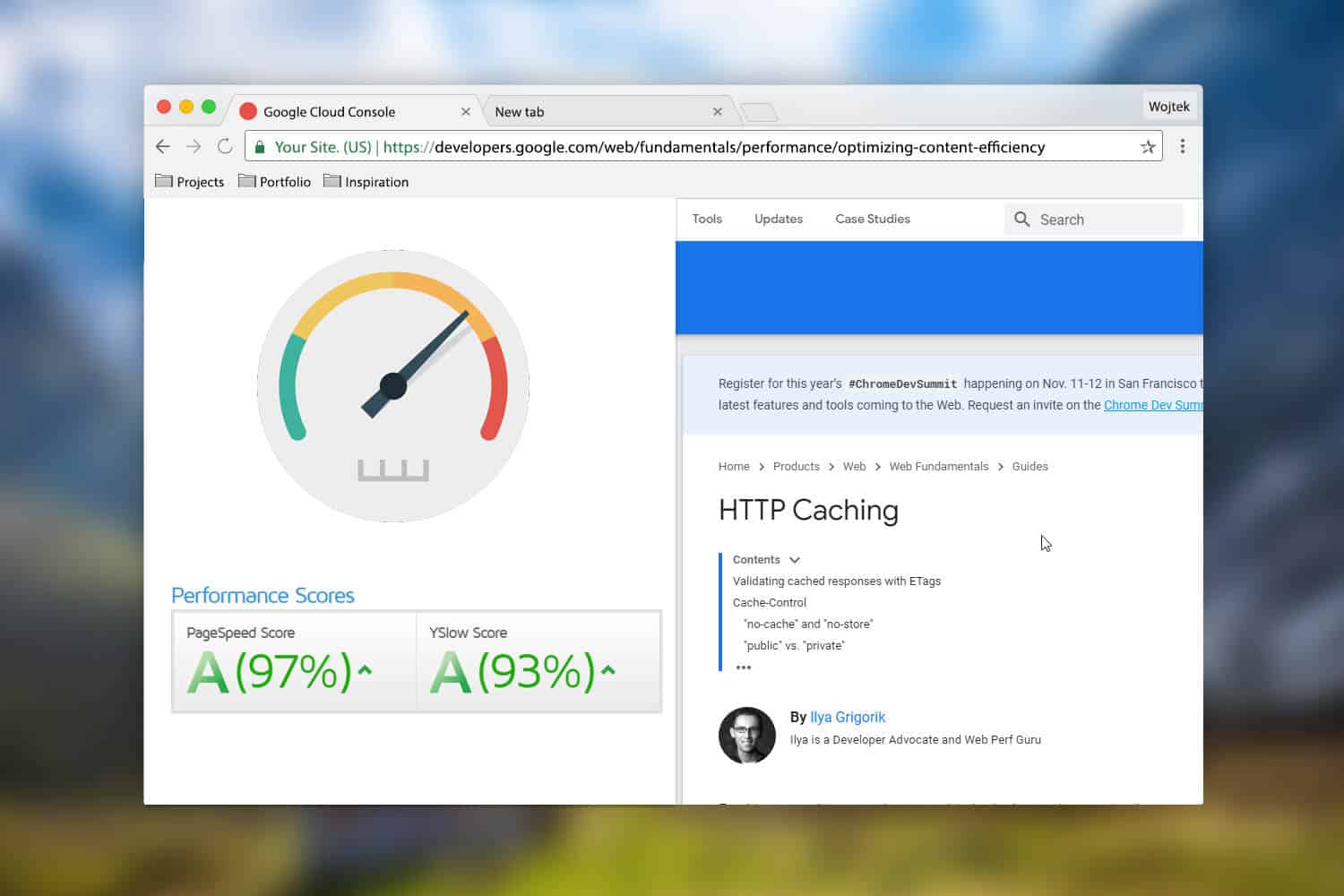

Additionally, if the database becomes unavailable, client applications might be able to continue by using the data that's held in the cache.Ĭonsider caching data that is read frequently but modified infrequently (for example, data that has a higher proportion of read operations than write operations). Retrieving data from a shared cache, however, rather than the underlying database, makes it possible for a client application to access this data even if the number of available connections is currently exhausted. Caching reduces the latency and contention that's associated with handling large volumes of concurrent requests in the original data store.įor example, a database might support a limited number of concurrent connections. The more data that you have and the larger the number of users that need to access this data, the greater the benefits of caching become. Decide when to cache dataĬaching can dramatically improve performance, scalability, and availability. The following sections describe in more detail the considerations for designing and using a cache. The requirement to implement a separate cache service might add complexity to the solution.

The cache is slower to access because it's no longer held locally to each application instance.There are two main disadvantages of the shared caching approach: You can easily scale the cache by adding more servers. The underlying infrastructure determines the location of the cached data in the cluster. An application instance simply sends a request to the cache service. Many shared cache services are implemented by using a cluster of servers and use software to distribute the data across the cluster transparently. It locates the cache in a separate location, which is typically hosted as part of a separate service, as shown in Figure 2.Īn important benefit of the shared caching approach is the scalability it provides. Shared caching ensures that different application instances see the same view of cached data.

If you use a shared cache, it can help alleviate concerns that data might differ in each cache, which can occur with in-memory caching. Therefore, the same query performed by these instances can return different results, as shown in Figure 1.įigure 1: Using an in-memory cache in different instances of an application. If this data isn't static, it's likely that different application instances hold different versions of the data in their caches. Think of a cache as a snapshot of the original data at some point in the past. If you have multiple instances of an application that uses this model running concurrently, each application instance has its own independent cache holding its own copy of the data. This process will be slower to access than data that's held in memory, but it should still be faster and more reliable than retrieving data across a network. If you need to cache more information than is physically possible in memory, you can write cached data to the local file system.

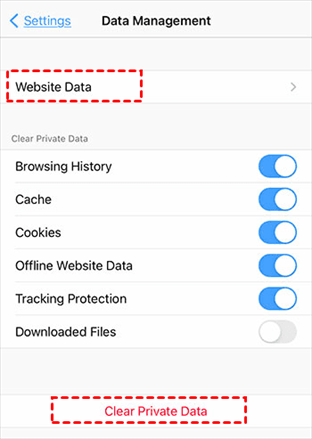

The size of a cache is typically constrained by the amount of memory available on the machine that hosts the process. It can also provide an effective means for storing modest amounts of static data. It's held in the address space of a single process and accessed directly by the code that runs in that process. The most basic type of cache is an in-memory store. Server-side caching is done by the process that provides the business services that are running remotely. Client-side caching is done by the process that provides the user interface for a system, such as a web browser or desktop application. In both cases, caching can be performed client-side and server-side. They use a shared cache, serving as a common source that can be accessed by multiple processes and machines.They use a private cache, where data is held locally on the computer that's running an instance of an application or service.It's far away when network latency can cause access to be slow.ĭistributed applications typically implement either or both of the following strategies when caching data:.It's subject to a high level of contention.It's slow compared to the speed of the cache.If this fast data storage is located closer to the application than the original source, then caching can significantly improve response times for client applications by serving data more quickly.Ĭaching is most effective when a client instance repeatedly reads the same data, especially if all the following conditions apply to the original data store: It caches data by temporarily copying frequently accessed data to fast storage that's located close to the application. Caching is a common technique that aims to improve the performance and scalability of a system.
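As a minimal sketch of a private in-memory cache for read-mostly data, the following Python class keeps a snapshot of each value for a limited time and, if the original data store can't be reached, continues to serve the last copy it holds. The names `PrivateCache`, `StoreUnavailable`, `load`, and `ttl_seconds` are hypothetical and chosen only for illustration. Using a time-to-live is one assumed way to bound how stale the snapshot can get, not something the text prescribes.

```python
import time
from typing import Callable, Dict, Tuple


class StoreUnavailable(Exception):
    """Raised by the (hypothetical) data store when it cannot be reached."""


class PrivateCache:
    """A per-process, in-memory cache whose entries expire after a TTL."""

    def __init__(self, load: Callable[[str], str], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._load = load        # reads a value from the original data store
        self._ttl = ttl_seconds  # how long an entry counts as fresh
        self._clock = clock      # injectable so expiry can be tested
        self._entries: Dict[str, Tuple[str, float]] = {}  # key -> (value, stored_at)

    def get(self, key: str) -> str:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[1] < self._ttl:
            return entry[0]      # fresh hit: the data store is not contacted
        try:
            value = self._load(key)
        except StoreUnavailable:
            if entry is not None:
                return entry[0]  # store is down: serve the stale snapshot
            raise                # nothing cached, so the failure surfaces
        self._entries[key] = (value, now)
        return value


if __name__ == "__main__":
    def load_from_database(key: str) -> str:
        # Stand-in for a slow or contended query against the original store.
        return "value-for-" + key

    cache = PrivateCache(load_from_database, ttl_seconds=30.0)
    cache.get("product:1")  # miss: loads from the store
    cache.get("product:1")  # hit: served from memory
```

Because each process would hold its own `PrivateCache`, two application instances can return different values for the same key until their entries expire, which is the inconsistency that a shared cache is meant to reduce.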
